Automating Legal Work with Lupl and Claude

Every law firm has the same problem. The work about work — what’s happening on a matter, what’s overdue, who’s responsible — still lives in Word tables and Excel trackers. Systems of record exist for documents and for billing. But for the work in between? Manual. Disconnected. Invisible.

That’s the gap Lupl was built to fill. And it’s the reason that connecting Lupl to an agentic AI changes what’s actually possible.

In a recent live session, we ran through what this looks like in practice: matter setup in under two minutes, engagement letters drafted and assigned automatically, fee analysis as a live interactive dashboard, matter trackers that update themselves from emails. We ran every use case live on real data, all controlled via natural language from within Claude.

Lupl connects to Claude via MCP — the Model Context Protocol, an open standard supported by Claude, Microsoft Copilot, Perplexity, OpenAI, and a growing list of others. Whichever AI tool your firm uses, the integration works the same way.

—

Why legal AI needs a system of record

The industries moving fastest on agentic AI all made the same investment first. Engineering teams have Linear and Jira — structured systems where agents can read the current state of work, take action, and log what they’ve done. Sales teams have Salesforce. These systems give agents context: not just what they’ve been asked in a chat window, but what’s actually happening across the whole portfolio of work.

Legal doesn’t have that. Or didn’t. Most firms are still managing matter plans in Word documents and tracking deadlines in Excel. You can layer an AI assistant on top of that and get some value — drafting help, research, summarisation. But you can’t automate processes. You can’t have an agent proactively flag a risk it spotted in a client email. You can’t give the AI the context it needs to be genuinely useful across a matter’s entire lifecycle.

Lupl is that missing layer. Connected to an agentic AI via MCP, it gives the AI structured, live access to what’s happening on each matter: the work streams, task statuses, responsible parties, what’s next. The AI can read that context, act on it, and write back to it.

“You cannot deploy agents at scale when people are tracking the status of things in Word documents and manual trackers.”

—

How the connection works

The integration uses MCP, now the de facto open standard for connecting AI agents to external systems. Setup is straightforward: authenticate, connect Lupl’s MCP server to your AI tool of choice, and the agent has secure, permission-scoped access to your matter data.
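For readers curious about what travels over that connection: MCP messages are JSON-RPC 2.0, and an agent invokes a server-side tool with a `tools/call` request. The snippet below is a minimal sketch of that message shape. The tool name `list_matter_tasks` and the `matter_id` argument are hypothetical placeholders, not Lupl’s actual tool names.

```python
import json


def build_tool_call(request_id: int, tool_name: str, arguments: dict) -> str:
    """Serialize an MCP `tools/call` request as a JSON-RPC 2.0 message."""
    message = {
        "jsonrpc": "2.0",
        "id": request_id,
        "method": "tools/call",
        "params": {"name": tool_name, "arguments": arguments},
    }
    return json.dumps(message)


# Hypothetical tool and argument names, for illustration only.
request = build_tool_call(1, "list_matter_tasks", {"matter_id": "M-001"})
print(request)
```

In practice the AI tool builds and sends these messages itself; nobody at the firm writes them by hand.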

The permission scoping is the important part. The connection is OAuth-based, so Claude (or Copilot, or Perplexity) can only see the matters a user is already entitled to see in Lupl. If a lawyer doesn’t have access to a matter in Lupl, they can’t access it through the AI either. The security model doesn’t change; it extends into the AI layer.
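The principle is easy to picture in code. This is a minimal sketch, not Lupl’s implementation: the `Matter` class, the `allowed_users` field, and the `visible_matters` function are all assumptions made for illustration. The point is only that the filter runs on the signed-in user’s identity, so the agent never receives more than that user could see.

```python
from dataclasses import dataclass, field


@dataclass
class Matter:
    matter_id: str
    name: str
    # User IDs entitled to see this matter (hypothetical access model).
    allowed_users: set[str] = field(default_factory=set)


def visible_matters(user_id: str, matters: list[Matter]) -> list[Matter]:
    """Return only the matters the authenticated user is entitled to see."""
    return [m for m in matters if user_id in m.allowed_users]


matters = [
    Matter("M-001", "Licensing dispute", {"alice", "bob"}),
    Matter("M-002", "Acquisition", {"bob"}),
]

# The agent acts on behalf of the signed-in user, so it inherits the same view.
print([m.matter_id for m in visible_matters("alice", matters)])  # ['M-001']
```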

Token usage is also lower than firms expect. Lupl holds structured data about the work: tasks, statuses, deadlines, work streams. Not documents. The AI isn’t reading thousands of files to answer a question about a matter. Our full demo session used roughly 1% of a standard Claude subscription’s usage allowance.

Works with: Claude (claude.ai, Cowork, Claude Code) · Microsoft Copilot · Perplexity · OpenAI · Any MCP-compatible AI tool

—

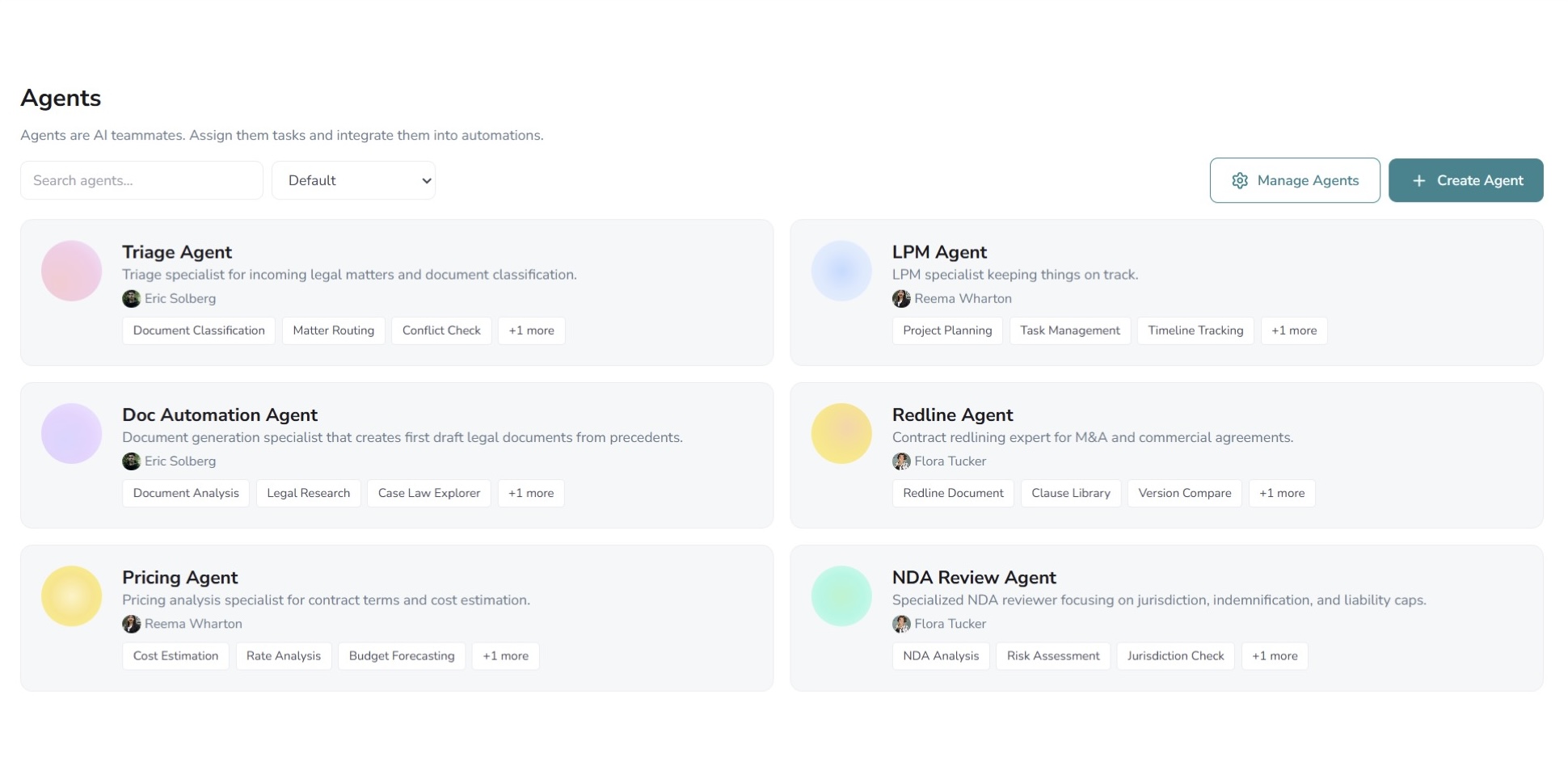

Six things firms are doing with this today

1. Daily matter briefings

A partner starts their morning by asking the AI for a briefing. Within seconds it pulls live data from Lupl: which matters need attention, where the risks are, what’s outstanding, what’s coming up this week.

In our session, we pulled up a matter with litigation exposure worse than expected, and another with an outstanding sanctions check nobody had actioned. The AI surfaced both without being asked, then offered to run a real-time web-based sanctions check on the spot.

A status sweep that would normally require opening multiple systems and manually scanning each one takes seconds.
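Part of why this is fast is that the underlying data is already structured. As a rough sketch of the kind of sweep involved, the snippet below sorts open tasks into "overdue" and "due in the next seven days". The `Task` fields and the sample data are invented for illustration and do not reflect Lupl’s actual data model.

```python
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass
class Task:
    matter: str
    title: str
    due: date
    done: bool = False


def briefing(tasks: list[Task], today: date) -> dict[str, list[Task]]:
    """Group open tasks into overdue and due-within-seven-days buckets."""
    week_end = today + timedelta(days=7)
    open_tasks = [t for t in tasks if not t.done]
    return {
        "overdue": [t for t in open_tasks if t.due < today],
        "due_this_week": [t for t in open_tasks if today <= t.due <= week_end],
    }


today = date(2025, 1, 6)
tasks = [
    Task("Matter A", "Sanctions check", date(2025, 1, 2)),
    Task("Matter B", "File witness statement", date(2025, 1, 9)),
    Task("Matter B", "Send engagement letter", date(2025, 1, 3), done=True),
]

summary = briefing(tasks, today)
for bucket, items in summary.items():
    print(bucket, [f"{t.matter}: {t.title}" for t in items])
```

The agent’s value is in what it layers on top of a sweep like this: reading the results in context and deciding what deserves a partner’s attention.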

2. Matter setup — from contract to live matter in under two minutes

A client sends a new instruction: a dispute arising from a content licensing agreement. Drop the document into the AI chat, ask it to set up a matter.

The agent reads the contract, identifies the governing arbitration rules (SIAC, Singapore), asks one clarifying question, then searches Lupl’s template library for the right playbook. It finds the Singapore arbitration template and builds out the full matter — work streams, tasks, procedural steps — without a single click in Lupl.

A senior associate would typically spend 30–60 minutes on this. We did it in under two minutes. The agent didn’t just create tasks either — it reasoned about which template fit, applied the matter context, and only asked for clarification where it genuinely needed it.

3. Engagement letters — drafted, assigned, and filed

With the matter live, we typed: “Draft me an engagement letter.”

The agent reached into the firm’s document automation system, identified the correct template, determined what variables needed populating, and generated a complete draft — pulling party names, dates, and scope directly from the matter context in Lupl. The draft was saved to iManage. A review task was assigned to the relevant fee earner, who got an automatic email notification with a link to the file.

No form-filling. No switching between systems. No manual task creation.

“This wasn’t vibe-drafted. It was drafted using the firm’s template — and the agent figured out the variables itself.”

4. Fee analysis — interactive pricing without a spreadsheet

We asked it to generate a fee quote for a dispute with a value range of £2m–£10m. The agent used the matter context from Lupl and a pricing skill incorporating the firm’s rate cards to build a live, interactive dashboard inside the conversation.

The dashboard compared fixed fee, capped fee, and hourly rate structures side by side. Adjusting a client discount slider updated projected margins across all three options in real time. The agent flagged its recommended approach and broke costs down by phase and team level.
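The arithmetic behind a comparison like this is simple, which is why it suits a slider. Here is a minimal sketch under stated assumptions: a single blended rate, a single internal cost rate, and margin defined as (fee minus cost) divided by fee. Every figure and name is hypothetical; a real pricing skill would draw on the firm’s rate cards and break the work down by phase and team level.

```python
def projected_margin(fee: float, cost: float) -> float:
    """Margin as a fraction of the fee charged."""
    return (fee - cost) / fee


def compare_structures(hours: float, rate: float, cost_rate: float,
                       fixed_fee: float, cap: float,
                       discount: float) -> dict[str, dict[str, float]]:
    """Compare hourly, capped, and fixed fees after a client discount."""
    cost = hours * cost_rate          # internal cost of delivering the work
    hourly = hours * rate             # undiscounted time-based fee
    options = {
        "hourly": hourly,
        "capped": min(hourly, cap),   # time-based, but never above the cap
        "fixed": fixed_fee,           # agreed up front, independent of hours
    }
    result = {}
    for name, fee in options.items():
        discounted = fee * (1 - discount)
        result[name] = {
            "fee": discounted,
            "margin": projected_margin(discounted, cost),
        }
    return result


# Hypothetical inputs: 400 hours at £500/hour, £200/hour internal cost,
# a £180,000 fixed fee, a £190,000 cap, and a 10% client discount.
for name, row in compare_structures(400, 500, 200, 180_000, 190_000, 0.10).items():
    print(f"{name:>6}: fee £{row['fee']:,.0f}, margin {row['margin']:.1%}")
```

Moving the discount slider amounts to re-running this calculation with a new `discount` value.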

Fee conversations happen constantly: in pitches, scope changes, multi-jurisdictional matters. Until now, partners have had this kind of analysis sitting in a spreadsheet someone built years ago, or not at all.

5. Driverless LPM — trackers that update themselves

“Driverless LPM” is the idea that matter trackers should update themselves, rather than relying on fee earners to do it manually. We walked through two ways to make that happen.

Via AI chat: paste a client email in, ask the agent to update the relevant matter. It reads the email, compares it against the existing matter structure in Lupl, and makes the appropriate updates without being told what to change.

Via email CC: even simpler. CC a Lupl agent on an email. No instructions. The agent reads it, understands the context, and updates the matter in the background — the way you’d hand something off to a capable LPM.

Fee earners stop spending time on tracker admin. Risks surface automatically. The matter plan stays current without anyone owning it.
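To make the "compare and update" step concrete, here is a minimal sketch. It assumes the agent has already read the email and extracted structured status changes (that language-model step is not shown). The `TrackerItem` class, the field names, and the sample tasks are all hypothetical. One design choice worth noting: anything that does not match an existing task is logged for human review rather than guessed at.

```python
from dataclasses import dataclass


@dataclass
class TrackerItem:
    task: str
    status: str
    owner: str


def apply_updates(tracker: dict[str, TrackerItem],
                  extracted: list[dict[str, str]]) -> list[str]:
    """Apply extracted status changes to the tracker and return a change log."""
    log = []
    for update in extracted:
        item = tracker.get(update["task"])
        if item is None:
            # Unknown task: record it for human review instead of guessing.
            log.append(f"UNMATCHED: {update['task']}")
            continue
        if item.status != update["status"]:
            log.append(f"{item.task}: {item.status} -> {update['status']}")
            item.status = update["status"]
    return log


tracker = {
    "Disclosure list": TrackerItem("Disclosure list", "In progress", "Associate"),
    "Witness statement": TrackerItem("Witness statement", "Not started", "Partner"),
}

# Hypothetical output of the agent's reading of a client email.
extracted = [
    {"task": "Disclosure list", "status": "Complete"},
    {"task": "Expert report", "status": "Delayed"},
]

print(apply_updates(tracker, extracted))
```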

6. Directory submissions

Chambers and Legal 500 submissions are perennially left too late. The data that goes into them — matters worked on, outcomes, team involvement — already exists in Lupl. Combined with each directory’s format requirements, an AI agent can draft submissions using live matter data.

What typically takes hours of partner and BD time becomes a first draft in minutes. The same logic applies to pitches, capability statements, and any document that draws on matter history.

—

Skills: how firm knowledge gets into the agent

Throughout the session, we kept coming back to “skills” — plain-English instruction files that tell the agent how to handle specific tasks. A matter setup skill. A pricing skill. A daily briefing skill.

Skills are written by humans — a knowledge management team, an innovation function, a senior lawyer who knows how things are done at the firm — and deployed firm-wide. Fee earners don’t write prompts. They just ask, and the agent follows the firm’s methodology.

They’re also an open standard, supported by Claude, OpenAI, and others. Skills built today are reusable regardless of which AI tool the firm standardises on. Anthropic has even published a skill-creation skill — a prompt that extracts tacit knowledge from subject matter experts and turns it into a reusable file.

—

A few practical notes on security and deployment

OAuth-based access means the AI only sees what the authenticated user is already permitted to see in Lupl. No broader data exposure.

Claude is already in active use at a number of law firms. The claude.ai chat interface is the most common entry point. Claude Cowork and Claude Code — which interact with operating systems more directly — are being evaluated separately by most firms.

iManage and NetDocs are building MCP capabilities. In the meantime, API integrations are already possible without MCP — firms don’t need to wait on DMS vendors to get started.

All of this applies equally to Copilot, Perplexity, or any MCP-compatible AI. The firm’s choice of AI tool doesn’t affect the Lupl integration.

—

The bottom line

These use cases aren’t proofs of concept. They ran live, on real matter data, in real time. Daily briefings. Matter setup. Document automation. Fee analysis. Driverless LPM. Directory submissions. All of it available now, on infrastructure that exists today.

What makes it work isn’t any single AI model. It’s an agent combined with live, structured access to the work. The industries furthest ahead on agentic AI all made the same investment first: a proper system of record. Legal is at the start of that journey.

“There are so many processes in law firms that — with the combination of an agent and a system of record for the work — could make everybody’s lives a lot easier.”
